In the beginning, there was no documentation. These days, infrastructure software has terrible documentation. Take heed of these commandments, for, among hackers, judgment day is every day.

There is a distributed systems revolution underway. Cloud computing and mobile systems are now ubiquitous, and web services are exploding (BTW, I firmly believe that we have barely scratched the surface. There are so many more exciting services to build that we will see decades of growth and excitement in computer science). This trend has not only turned almost every regular developer into a distributed systems developer, but also pointed out just how little infrastructure we currently have for building distributed systems. As a result, the last decade of systems research as well as industry development has been marked by a worldwide effort to come up with frameworks, components, and other underlying systems infrastructure. Such systems infrastructure software includes web frameworks, NoSQL systems, databases, recommendation engines, lock managers, replication packages, analytics engines, machine learning packages, and so forth. All of this infrastructure needs to be documented in order to be useful. This post is about how to document such systems software.

Documenting systems software is qualitatively different from documenting consumer software. Whereas consumer software documentation needs to be task-oriented, documentation for systems software needs to be contract-oriented. If you're a developer or user of systems software, this post provides some principles that might help you write, and demand, better documentation.

This is not a post about how (not) to write internal documentation, e.g. of the form:

++i; /* add one to i */

Internal documentation, whether it is in comments embedded in code or in white papers, is for your own consumption. Make it as terse or as verbose as you like; it's your own business. I do have strong opinions on how it needs to be written for my own projects, but they're beyond scope here. This manifesto is about user manuals and web pages that are released along with systems software, that is, companion text to code intended for public consumption. Having read a lot of such text in the NoSQL and database space, I want to call for better practices across the industry.

Documenting systems software is qualitatively different from documenting consumer software because the users have a different kind of relationship with the software. With consumer software, the target is the end-user who will carry out a task, and the documentation has to center around questions of the form "how do I use this software to do something?" We've all wondered about how to use Photoshop for red-eye reduction (answer: there are more than a dozen ways, but they either produce terrible results or require you to figure out alpha channels and color theory), how to use Office to generate form letters (answer: I hate to admit that I once had to do this and carried it out with Clippy's help but cannot any longer), or how to use GIMP for, well, anything (answer: it's just like Photoshop and insanely more difficult). The central point here is that consumer documentation needs to be mechanistic and illustrative, because the target audience is task-oriented.

In contrast, the target of documentation for systems infrastructure is another developer. Systems documentation is there to help facilitate the development of other software. To do that effectively, there needs to be a contract between the developer, who is using the system, and the system, which is providing the functionality that the developer needs. The documentation serves as that contract. It needs to be clear, declarative and comprehensive. This article will make the case that writing such a contract should be treated as writing a software specification.

Time for holy documentation.

What I've enunciated so far is, from an academic standpoint, a very orthodox approach. Since the '60s, academics have argued for formal specifications for software. Such specs would be fantastic: they would take out any and all ambiguity of English; enable one to check the implementation against its spec, eliminating all bugs save for the ones in specs themselves; facilitate reasoning about the composition of different modules; among others.

But it turns out that writing formal specifications for software is such a difficult religion to follow that few academics follow the orthodox teachings themselves. Instead, all but a few dedicated academics appear at the high altar of formal specification only for occasional talks and specially crafted (and, invariably, very short) examples. Most academic software is documented in exactly the same manner that most industry software is documented: ad hoc English text.

I, too, will deliberately break with the orthodox view: I will not issue an idealistic but non-realizable call for formal specifications. The orthodox religion is too difficult to follow for all but the most dedicated. And sadly, there is little in between the orthodox approach and a life of hedonistic, heathen practices where pretty much anything goes as far as documentation.

In this manifesto, I want to enunciate some principles for structuring that ad hoc English text so it can help facilitate more robust systems. I realize the religious nature of this discussion, so I'll try to remain true to form and plagiarize heavily from previous books that successfully documented the human-heavens API in the past.

Because every breakaway movement needs a clear identifying name, we shall call our splinter movement Principled Documentation. Chances are, if you're reading this, you already are a disciple; there are many who practice it without realizing it. If you don't, I hope you'll convert and zealously preach that others do the same. The goal of this post is to enunciate our principles clearly so others can follow them. For no splinter religion would be worth its salt if it did not try to convert everyone else on earth.

If you think you and you alone (or people like you) own certain words, phrases, references, or sentence structure because of your belief system, here's a fair warning: while I am sure that there is absolutely nothing here that is blasphemous, objectionable to a hacker, or offensive to a thinking individual, you should probably read something else.

The central commandments of the Principled Documentation movement are as follows:

There is only one audience and their name is Developers.

It's critical to choose your audience with care and to write solely for that audience. The developers who will be using your system are drastically different from just about everyone else, especially the people in suits who make purchasing decisions. You can have as much marketing material as your budget permits, but you should not confuse such material with system documentation, or vice versa. Mixing the two heralds the end times, like breaking down the barriers that hold back Yajuj and Majuj, Gog and Magog.

Thou shalt view thy documentation as a specification instead of a description.

The documentation forms the contract between a system and its users. Like any good contract, system documentation must specify what both parties expect from each other and can count on in return. This requires describing what software needs, what it does, and what it provides -- the three angels that fit on the head of a pin, assuming that the pin also holds some RAM, for they typically need to appear in a PDF file or web server. A holy book that simply provides a description of, say, the various machinations among the gods on Mt. Olympus, is of little utility. The successful books have a prescriptive specification for what their users ought to do. Phrases such as "thou shalt," "thou shalt not," "we shall provide," "we guarantee" and "surely, you can count on" are part and parcel, not just of a religious manifesto such as this one, but every holy book.

Thou shalt specify thy assumptions.

First, the docs must specify all the contractual obligations that the users must fulfill to use the system correctly. That is, all assumptions of the system about the inputs must appear in the documentation. Does an input array need to be sorted? Must a netmask parameter be large enough to accommodate the number of hosts passed in another parameter? Should the caller be holding a special lock when calling that routine? Perhaps a call to a function needs to be bracketed in some special way or else it will never work and all the multithreaded code in the world that uses it will be broken? The documents must enunciate these preconditions. If it's not in the docs, the system developers do not get to blame their users.
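As a rough illustration of a documented precondition, here is a minimal Python sketch. The function `contains` and the helper `is_sorted` are hypothetical names invented for this example; the point is only that the "Requires" line states what the caller owes the function, and what happens (nothing is promised) when the caller does not deliver.

```python
from bisect import bisect_left
from typing import Sequence


def contains(sorted_items: Sequence[int], target: int) -> bool:
    """Report whether target occurs in sorted_items.

    Requires: sorted_items is sorted in non-decreasing order.
    If the precondition is violated, the result is unspecified.
    """
    # Binary search is only correct because of the documented precondition.
    i = bisect_left(sorted_items, target)
    return i < len(sorted_items) and sorted_items[i] == target


def is_sorted(items: Sequence[int]) -> bool:
    """Helper a caller can use to check the precondition (linear time)."""
    return all(a <= b for a, b in zip(items, items[1:]))
```

A caller who cannot guarantee sortedness knows, from the docs alone, that it must sort first or pick a different routine.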

Thou shalt specify thy functionality.

Second, the docs need to spell out the functionality that the code carries out. This typically comes naturally to most seasoned programmers. For instance, this is a good specification: "given an unsorted array, this function returns the median." So is "this function will issue an eventual update to the database." A good rule of thumb is that every such specification should have a verb in it.
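The median example above might look like this when written down as a docstring. This is a sketch, not a reference implementation: the tie rule for even-length inputs and the behavior on empty input are assumptions added here, precisely because the one-line English version leaves them open.

```python
from typing import Sequence


def median(values: Sequence[float]) -> float:
    """Return the median of values.

    Requires: values is non-empty; the order of values does not matter.
    Returns: the middle element of the sorted values, or the mean of the
    two middle elements when the length is even (an assumed tie rule).
    Does not modify values.
    """
    if not values:
        raise ValueError("median() requires at least one value")
    ordered = sorted(values)  # sorted() copies, so the input is untouched
    mid = len(ordered) // 2
    if len(ordered) % 2 == 1:
        return ordered[mid]
    return (ordered[mid - 1] + ordered[mid]) / 2
```

Note that every sentence in the docstring has a verb in it: returns, requires, does not modify.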

Strange as it may seem, it is possible to find documentation, issued by large companies even, where the API description gets hung up on all kinds of irrelevant details without concisely specifying what it is that the API does. This kind of oversight marks the footsteps of Beelzebub, for how can the code be correct if there is no indication as to what it does? Worse, how can it even be incorrect?

If the documentation does not actually say "here is what this function promises to do," then it could be replaced with a NOP, or the universal answer 42. In fact, there is plenty of NoSQL documentation out there that is so non-specific that it does not say what the "read data from database" function will or will not return. This is a clear sign that at least the docs, and perhaps the project, have failed. It's just a matter of time before a bright developer, having improved benchmark scores by considering a write finished before it has been written anywhere, will want to improve the read path by returning 42. Such a database is, after all, eventually consistent.

You had one job, one invariant: "Do not touch the apple, and you stay in Eden." But it wasn't written down, and we all know how that turned out.

Thou shalt honor thy invariants.

Finally, the docs need to specify the guarantees the code provides and the invariants it upholds. This clearly telegraphs to the developer additional facts about the universe she can count on. So if your code always ensures that, say, there will be at most N outstanding operations written to a journal, or that journalled operations will spend at most tau seconds before being committed to the main store, the documents need to spell this out (I have taken it as a given here that the concept of a journal is something your system must expose to the user; its exposition actually violates some of the other commandments). Undoubtedly, the code went to great lengths to maintain that invariant. Incorporate it into the contract to make it useful. Say it out loud and proud, then make sure your code lives up to it. Perhaps one can build additional functionality, such as a consistent backup mechanism, based on this invariant.
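To make the journal example concrete, here is a small Python sketch of an invariant that is both stated in the docs and enforced in the code. `BoundedJournal`, its `commit` callback, and the policy of committing the oldest entry first are all hypothetical choices made for this illustration.

```python
from collections import deque
from typing import Callable, Deque


class BoundedJournal:
    """Hypothetical write-ahead journal.

    Invariant: at most max_outstanding operations are journalled but not
    yet committed, at any moment the caller can observe.
    """

    def __init__(self, max_outstanding: int, commit: Callable[[str], None]) -> None:
        if max_outstanding < 1:
            raise ValueError("max_outstanding must be at least 1")
        self._max = max_outstanding
        self._commit = commit
        self._pending: Deque[str] = deque()

    def append(self, op: str) -> None:
        """Journal op, committing the oldest entries first if room is needed."""
        while len(self._pending) >= self._max:
            self._commit(self._pending.popleft())
        self._pending.append(op)
        # The documented invariant, checked where it could be broken.
        assert len(self._pending) <= self._max

    def outstanding(self) -> int:
        """Return the number of journalled, uncommitted operations."""
        return len(self._pending)
```

Because the bound is in the contract, something like a backup tool could size its buffers from `max_outstanding` without reading the implementation.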

Conversely, if the docs leave out an invariant, clear-thinking users must assume that the software will fail at maintaining that invariant, probably at the most precarious moment when the going gets tough. If an invariant is not in the contract, it's just happenstance. Only a fool would count on it.

What is unacceptable is to imply an invariant without actually having the conviction to say so openly. Does a database ensure that an object is resilient against F faults? If so, the documentation needs to clearly say this aloud. For instance, no one knows if some very popular NoSQL stores actually offer a fault-tolerance guarantee. Certainly, there is no shortage of implications that they do, and certainly, all the language points to it, but the documentation is often, at best, mercurial.

Being clear about your invariants in your documentation has the crucial side-effect of keeping them front and center in the developers' minds, so later modifications to the code base will continue to maintain the same invariants. Documentation that implies fancy, desirable features without actually clearly offering them bears the footprints of the great shapeshifter Loki, and Ragnarök is just around the corner when Loki has been unchained.

Thou shalt write complete, self-contained documentation.

The docs constitute the sole and full agreement with the user. They cannot assume that the user has read or will read any and every blog entry, press release and tweet issued by a company. They most certainly cannot depend on information in transient, ephemeral media. Docs are like the newspaper of record: everyone may well have watched OJ slowly run away in a white Bronco on TV, but the documentation needs to clearly describe what happened for posterity.

But it is possible for documentation to incorporate other documents by reference, same as with contracts. So long as these documents are as permanent, uniquely identifiable, and referable as the documentation itself, this is a fine practice. It's perfectly acceptable to say that a compiler implements the ANSI C standard or that a database implements a particular SQL variant, with a reference to the appropriate text. Note the insistence on unambiguous, specific references. What documentation cannot do is incorporate vague notions or collections of utterings from corporate twitter feeds, or else the trickster Nanabush will sneak into applications.

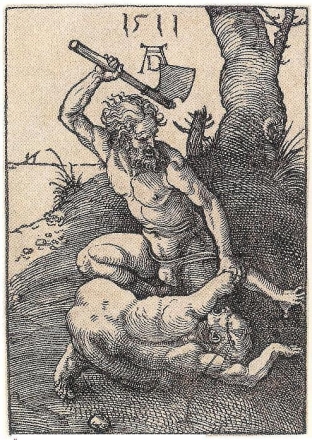

Layered OSI model of hell. The data link layer, with Lucifer, is reserved for mechanistic descriptions.

Thou shalt cover thy naked mechanistic descriptions.

It is a cardinal sin to describe the behavior of a complex piece of software in lieu of its contract. It is a dishonest cop-out to explain, even in great detail or especially in great detail, the complex machinations the system performs with data, and then to say "well, we described what we do in great detail, and if you got caught by an edge case and lost data, well, it's your fault for not reading our description carefully enough." This is at best lazy, some might say duplicitous, but in any case not the way smart people conduct their affairs. Dante undoubtedly has a special level of hell dedicated to people who practice it. A plumber who fixes a tap is legally obligated to not leave that house with a leak; he doesn't get to say "well, my routine involves spinning the nut 5 turns no matter what and if it leaks after that, it's your problem." Similarly, a software engineer cannot pre-issue a disclaimer that goes: "well, I developed a weird interpretive dance routine (European high art, NSFW) for your data that looks like this," and thereafter make fun of you when he loses data, saying "it's your own fault! it's not like I geared up to guard Ft. Knox! I was skateboarding in a loincloth all along, and yet you entrusted me with your data." Our current laws do not compel the software engineer to behave better, but engineering pride must.

There are good reasons why smart developers will avoid mechanistic descriptions. The users adopt a component because they do not want to understand the complexities that go into its internals. Butler Lampson observed in the mid-'90s, when Sun was making a lot of noise about how Java would usher in a new age of reusable applets and microcomponents, that the metric that determined software reuse was the complexity of the component divided by the complexity of its documentation. So, components that perform complex tasks behind a relatively simple interface provide better value to their adopters than small components with complex descriptions. He boldly predicted that Sun's component reuse model would fail. There is some information in the fact that no Java Beans were involved in serving this page, or, roughly speaking, any other page on earth.

So if a system forces its users to have to understand its internals before it can be used competently, it is in a losing predicament. Let's do an optometrical test. Is the documentation more useful like this:

This code goes through an array pair by adjacent pair, compares each, and swaps the pairs where the latter element is larger than the former element, repeating the process until no swaps are performed on a complete pass through the array.

or this:

Returns a sorted array.

And did you catch the bug in the mechanistic description? Just imagine how much worse the description would have been, and how many little things could have gone wrong, if the implementation had been based on quicksort.
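One way to hunt for the bug is to transcribe the mechanistic description into code word for word. The Python sketch below does that; `sort_as_described` is a made-up name, and the reading of "swap when the latter is larger" is a literal one. Under that reading the result comes out in descending order, which may well be the slip the text is alluding to, and which the prose never would have forced anyone to notice.

```python
from typing import List


def sort_as_described(items: List[int]) -> List[int]:
    """Follow the prose description step by step, on a copy of items."""
    result = list(items)
    swapped = True
    while swapped:  # "repeating the process until no swaps are performed"
        swapped = False
        for i in range(len(result) - 1):
            # "swaps the pairs where the latter element is larger
            # than the former element"
            if result[i + 1] > result[i]:
                result[i], result[i + 1] = result[i + 1], result[i]
                swapped = True
    return result


print(sort_as_described([3, 1, 2]))  # prints [3, 2, 1]
```

The one-line contract, by contrast, leaves exactly one question to settle (which order?), and it is a question a reader will think to ask.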

Now imagine a NoSQL store trying to maintain consistency and fault-tolerance invariants, which it cannot even articulate concisely, by describing its own operation from the ground up. Shouldn't it just offer an API, perhaps named safe_write, whose description is that data written with it can be retrieved after a failure? Of course, the tricky extra credit question for advanced players is: what if the same data store provides 5 different kinds of SAFE writes, each with a complex mechanistic description -- which one is the proper safe write you want when you don't want your data lost? Every time a user reads the manual for a NoSQL system and is forced to go through a gory description of various-things-they-do-to-your-data-which-you-must-master-or-else-they-will-lose-your-data-haha-just-kidding-they-are-going-to-lose-your-data-anyway-even-after-you-mastered-their-inner-workings-because-they're-broken-by-design, she thinks "Cool story, bro, what with all those implementation details and everything. Now give me an actionable guarantee."

A side effect of principled documentation is that it creates maneuvering room to change the implementation later. You want quicksort instead of bubble sort? No problem. The documentation doesn't need to change.
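A small sketch of that maneuvering room, in Python: two interchangeable implementations and one contract check that neither knows about. The names `bubble_sort`, `quick_sort` and `honors_contract` are illustrative, and the contract wording (same elements, non-decreasing order, input left unmodified) is an assumption about what "returns a sorted array" would promise.

```python
from collections import Counter
from typing import Callable, List


def bubble_sort(items: List[int]) -> List[int]:
    out = list(items)
    for end in range(len(out) - 1, 0, -1):
        for i in range(end):
            if out[i] > out[i + 1]:
                out[i], out[i + 1] = out[i + 1], out[i]
    return out


def quick_sort(items: List[int]) -> List[int]:
    if len(items) <= 1:
        return list(items)
    pivot, rest = items[0], items[1:]
    smaller = [x for x in rest if x < pivot]
    larger = [x for x in rest if x >= pivot]
    return quick_sort(smaller) + [pivot] + quick_sort(larger)


def honors_contract(sort: Callable[[List[int]], List[int]], items: List[int]) -> bool:
    """Contract: same elements, non-decreasing order, input not modified."""
    before = list(items)
    result = sort(items)
    same_elements = Counter(result) == Counter(before)
    ordered = all(a <= b for a, b in zip(result, result[1:]))
    return same_elements and ordered and items == before
```

Swapping one implementation for the other changes nothing the check, or the user, can see.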

Yamantaka gets upset if you make up new terms for old concepts.

Thou shalt use existing, well-known terminology and concepts wherever possible.

It's not easy expressing all the invariants that complex software maintains. If I had to describe everything that HyperDex does to ensure fault-tolerance in a mechanistic fashion, it would easily take dozens of pages of English text. But luckily, we do not exist in a vacuum -- we all have a shared geeky background, with well-defined terms that are so well-known that even academics have gotten to know them intimately and teach them to new generations of programmers.

So, documentation must take advantage of this common heritage by using concise, well-understood terminology. Thanks to the work of dozens of database and systems researchers, I can specify HyperDex's guarantees for NoSQL transactions very simply, like this: it provides one-copy serializability and ACID guarantees for transactions. There, anyone who is familiar with the area can immediately build services on top, without me having to describe, and them having to master, anything about the internal implementation. An inability to use existing terms for existing concepts demonstrates that the developers are not familiar with the topic, that what is elementary to others was new material to them, which is fine, but it often indicates that they may not have thought about all the well-known edge cases. As a certain mystic poet put it, they are like fish in water, who know not of the sea.

A corollary to this is that documentation should not invent new terms unless necessary to clearly mark, and refer back to, new concepts introduced uniquely in that system. Minor tweaks to known concepts, or combinations of two well-known concepts, do not get new names. For instance, no one, except perhaps Dilbert's boss, is impressed by the mumbo jumbo names NoSQL developers have invented for age-old concepts such as delayed writes, quorums and gossip protocols. We're not at the weekend SCA meeting, these devs are not Prince Bromir, son of Nohsiquel, and they do not need to sound medieval or learned. My anthro colleagues would call the made-up 4-word dual-hyphenated special phrases "in-group identification" and point out how it's primarily used for exclusion. He who controls the language frames the debate, and keeps out critics who use standard terms everyone can understand. And sometimes, groups of developers will develop their own language, repeating each other's nonsensical words in the blogosphere, like unsupervised twins inventing their own lingo. "Water," the mother points out. But the twins spend much more time with each other reinforcing their words than they do with the mother. "Gaga" twin #1 calls it. "Gaga" twin #2 repeats. So gaga it is. Or perhaps it is "Mongo.WriteConcern," and no one can remember if the original concept was "fault-tolerance" or "durability" or "consistency."

There is a lost panel, and the eighth cardinal sin, reserved for bad documentation writers.

Thou shalt not covet existing terminology and assign it new meaning.

A related cardinal sin in this space is to co-opt existing terms, but to slightly re-define them. The more subtle the redefinition, the more possessed the documentation.

I once reviewed an academic paper which was evaluating a particular approach to routing and wanted to compare their routes to the optimal path possible. Optimal paths are NP-hard to compute, so they didn't compute them; the authors picked a good path using a simple heuristic and called it OPTIMAL throughout their write up. The peer review process pointed this out, and instead of fixing this oversight, the authors just dropped a sentence into the introduction of their paper that says "In the remainder of this paper, the word optimal refers to heuristically determined paths, which may not necessarily be optimal." Their conclusions section argued that their idea was successful because the "system is within a few percent of optimal." Science is hard when words don't have any meaning.

That's why people get very upset when system documentation redefines terms. This is especially true for words like "consistency" which have both a colloquial usage and a technical definition. System documentation should be written for a technical audience; any mixing and matching, especially when it can lead to misunderstandings, is unacceptable. A document that uses technical terms with their colloquial meanings is like a medieval leprosy patient mixing in with the general population; that document needs to shout UNCLEAN every few sentences. Such usage must be avoided at all costs, and, ever the optimist, I will ignore the possibility that the documents are purposefully written to mislead the audience, for surely those authors are destined for hellfire.

When someone uses pre-defined words, they implicitly import their connotation. For instance, the term "ACID transactions" has a very specific and well-defined connotation. And data stores that perform transactions on at most one object at a time come nowhere near that connotation. Technical terms mean something to people who know the area. The people co-opting a phrase might perhaps not know the area, and that's totally OK, but they sure as hell know and are taking advantage of the positive connotation the word carries. Such behavior dumbs down the entire industry; bad science drives away good science, it also drives away the smart developers, and it even drives away business. And this kind of behavior will earn a well-deserved slap on the wrist. Why? Because the Followers of Principled Documentation are too nice to cut off hands.

Thou shalt specify performance expectations whenever possible.

Performance properties often get short shrift. It often matters to the user of an interface whether they can expect an operation to take a while, or whether it'll be fast. So it's invaluable to document such expectations.

One of my favorite theses of all time is Sharon Perl's PhD thesis from MIT. She built a system by which developers not only express their expectations of the performance of different API calls, but can convert that specification into performance unit tests.

Sadly, I know of no such open source tools available at this time, and expressing performance properties formally is still quite difficult. But simple English specifications of "this operation takes time linear in X" or "this operation may block until Y is completed" go a long way in letting developers use an API in an informed manner. The state of the art is so backwards at the moment that many developers will happily take, and thank you for, imprecise specifications of the expected case. Worst-case bounds are not necessary, though, of course, it helps if the system can provide them.
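To give a flavor of what a plain-English performance expectation plus a matching test might look like, here is a hedged Python sketch. It is not the system from the thesis mentioned above; `find_first` and `check_linear_bound` are hypothetical, and counting comparisons instead of measuring wall-clock time is a choice made here to keep the check deterministic.

```python
from typing import Sequence, Tuple


def find_first(items: Sequence[int], target: int) -> Tuple[int, int]:
    """Return (index of the first occurrence of target or -1, comparisons made).

    Performance: makes at most len(items) comparisons, i.e. time linear
    in the length of items. Never blocks.
    """
    comparisons = 0
    for index, value in enumerate(items):
        comparisons += 1
        if value == target:
            return index, comparisons
    return -1, comparisons


def check_linear_bound(n: int) -> bool:
    """Performance unit test: the worst case (target absent) stays in bounds."""
    _, comparisons = find_first(list(range(n)), -1)
    return comparisons <= n
```

Even this crude form tells a caller that handing the function a ten-million-element list inside a hot loop is a decision, not an accident.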

A few fully-defined parables are as good as many small detailed microspecifications.

There are two main ways of writing API specifications.

The first approach is to go interface by interface, call by call, and specify at the micro level the assumptions, functionality and the upheld invariants of each function. This provides a micro-level, bottom up description of the contract to which users can hold the system. Think of these as micro-theorems. Such micro-theorems are excellent for reference, but reasoning bottom-up from tiny factoids is difficult.

The second approach is to provide examples and tutorials. Think of tutorials as macro-theorems. Properly done, a tutorial states that "if you follow these steps, our system is obligated to produce the following output." Often, this is just as, and sometimes more, insightful than a slew of micro-specifications. A tutorial can provide coverage over wide swaths of code and pin the interface down in a way a thousand words cannot, as long as it is fully grounded. Snippets of free-floating, incomplete code, of course, are worthless. So are tutorials that fail to cover sufficiently interesting edge cases. Imagine a simple function that evaluates a quadratic polynomial. A tutorial that displays f(1) and f(2) is insufficient -- any number of functions can be fitted through those two points. If the documentation clearly states that this is a quadratic, a tutorial with three values in it fully defines the function. Similarly, a good tutorial will, when coupled with the rest of the documentation, illustrate so much of the system behavior that nothing will be left up to the imagination of the reader.
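The quadratic argument can be checked directly. In the Python sketch below, `f` and `g` are two made-up quadratics that agree at 1 and 2 but differ at 3, so a two-value tutorial cannot tell them apart; `interpolate` then rebuilds `f` from three sample values using Lagrange interpolation, with exact fractions to avoid rounding noise.

```python
from fractions import Fraction
from typing import Callable, Sequence, Tuple

Point = Tuple[int, int]


def f(x: int) -> int:
    return 2 * x * x - 3 * x + 1  # the hypothetical documented quadratic


def g(x: int) -> int:
    return x * x - 1  # a different quadratic with the same f(1) and f(2)


def interpolate(points: Sequence[Point]) -> Callable[[int], Fraction]:
    """Return the lowest-degree polynomial through points (distinct x values)."""
    def poly(x: int) -> Fraction:
        total = Fraction(0)
        for i, (xi, yi) in enumerate(points):
            term = Fraction(yi)
            for j, (xj, _) in enumerate(points):
                if i != j:
                    term *= Fraction(x - xj, xi - xj)
            total += term
        return total
    return poly


# Two samples are ambiguous; the third one separates f from g.
print(f(1) == g(1), f(2) == g(2), f(3) == g(3))  # prints True True False

# Three samples plus "this is a quadratic" pin the function down.
rebuilt = interpolate([(x, f(x)) for x in (1, 2, 3)])
print(rebuilt(4) == f(4))  # prints True
```

The tutorial's three values only carry that much weight because the documentation separately promises the function is a quadratic; without that sentence, the samples prove nothing.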

Neither of these approaches is superior to the other. Whenever possible, documentation should provide both. The HyperDex manual provides ample examples and tutorials in addition to documenting each API call. Every good CPU spec documents every single instruction in the ISA (bottom up) along with sample programs, say, for correct synchronization (top down). I don't even know if Django provides a bottom-up specification, as I have found the top-down tutorials to provide sufficient coverage. So, it's a folly to relegate the tutorials and examples to second-class status; they can be just as useful in establishing a contract.

Your users will make Cain look like a loving sibling if you delete their docs underneath them.

There shall be one true book. Thou shalt clarify and reissue documentation instead of creating countless less-than-holy texts.

Often, there is a mishmash of documents surrounding any software component. Whenever possible, a good software release will collect and gather them into a single, true, internally consistent, "consistent cut" of documentation. The onus for this lies with the system developers. Even in the age of excellent search engines, it is not realistic to expect users to track and determine a consistent cut across an ever-changing set of documents. This is especially true because so many non-Followers of Principled Documentation leave behind slews of out-of-date documents scattered on the web that no one can figure out where current, actionable information resides.

Thou shalt not kill old documentation.

That said, there are good reasons to leave behind clearly marked old documentation. Not everyone upgrades their software. Pulling old documentation off the web is a vengeful practice. You should mark the documentation as having been deprecated, but leave it behind for posterity.

We are our brogrammer's keepers. Let's hold them, gently but firmly, to higher standards for systems documentation and guide them through the valley of the shadow of marketing-speak to make the world a better place. It's either that, or jihad.